We’ve all been there. You’re out in the field, the light is hitting the peaks just right, or your subject is doing something truly epic, and you nail the composition. You press the shutter, feeling like a total pro. Then you get home, pull the RAW files up on a big screen, and realize the focus is just a hair off. Maybe it’s on the tip of the nose instead of the eye, or the foreground flower is sharp but the mountain range is a blurry mess because your depth of field was too shallow.

It’s the age-old struggle of photography: physics vs. reality. For over a century, we’ve been slaves to the glass. If you wanted everything in focus, you had to stop down your aperture, which meant losing light or dealing with diffraction. If you wanted that creamy bokeh, you had to sacrifice focus on everything else.

But if recent research is any indication, those days may be numbered. Enter the era of Computational Autofocus.

This isn't just a minor firmware update for your favorite mirrorless camera. We are talking about a fundamental shift in how light is captured and processed. If you’ve been following the trends, you know that AI-integrated mirrorless cameras are already changing the game, but computational autofocus is the piece of the puzzle that could make the "perfect shot" far easier to get.

What Exactly is Computational Autofocus?

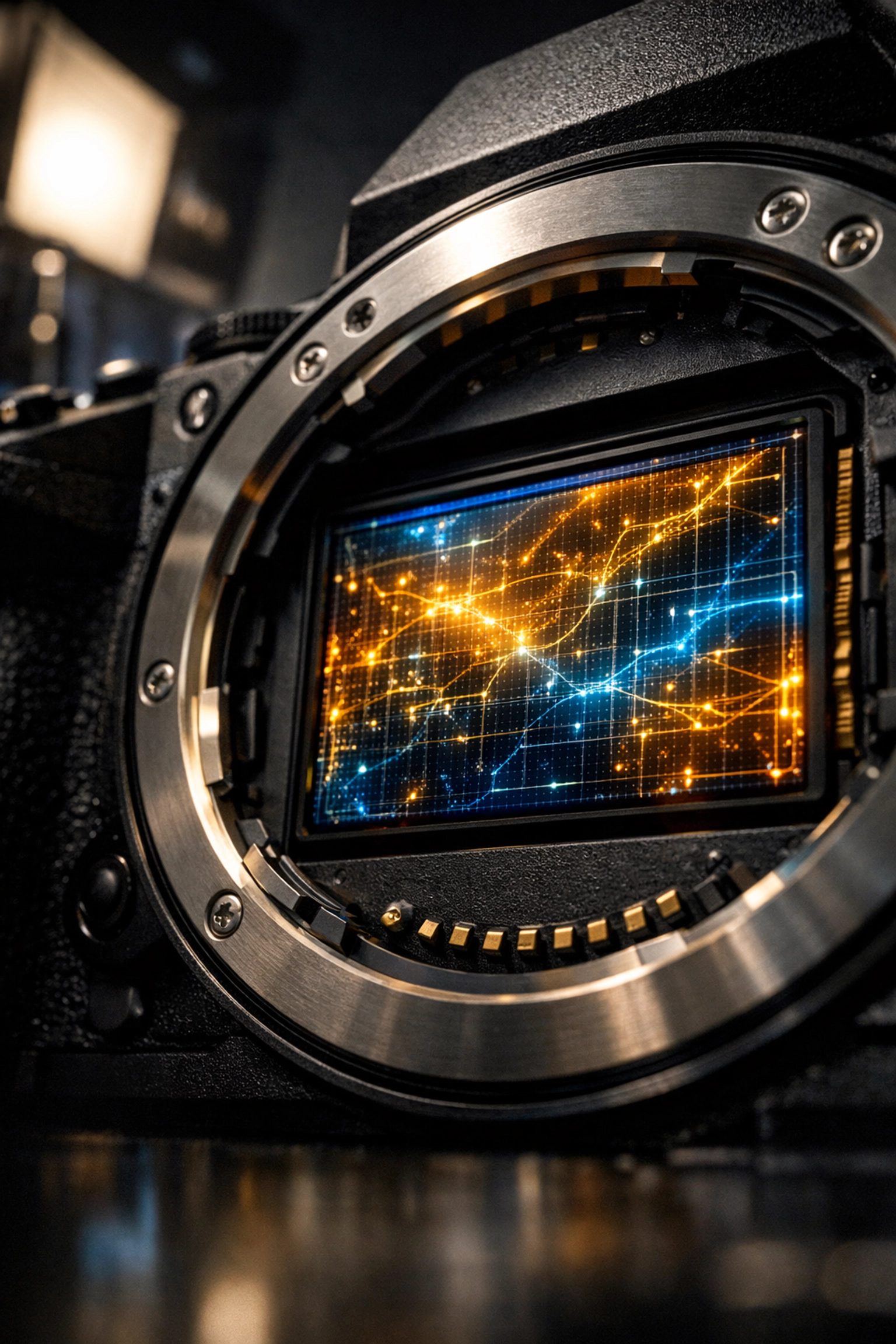

In simple terms, computational autofocus (CAF) is the marriage of traditional optics and heavy-duty math. Instead of relying purely on a physical lens element moving back and forth to find a single plane of focus, CAF uses algorithms to reconstruct an image where multiple points, or even the entire scene, are in sharp focus simultaneously.

Think of it like the difference between a standard calculator and a supercomputer. Traditional autofocus is the calculator: it solves for one focus distance at a time. Computational autofocus is the supercomputer, working out the best focus for many parts of the frame at once.

The "Lohmann Lens" Breakthrough: The End of Focus Stacking?

Recent research has introduced something called a "computational lens." This isn't your standard piece of curved glass. It’s a hybrid system built around a Lohmann lens (a pair of cubic lenses that shift against each other to change focus), combined with a phase-only spatial light modulator.

Wait, don’t fall asleep on me. I promised simple, didn't I?

Here is the "explain like I’m five" version: Imagine if your camera lens could bend light differently for every single pixel on the sensor. Instead of one lens focusing on one distance, each pixel basically gets its own tiny, adjustable lens. This allows the camera to see different parts of the image at different depths all at once.

For landscape photographers, this is the "holy grail." It could mean no more carrying heavy tripods just to perform focus stacking. You wouldn't need to take five different shots at five different focus points and blend them in Photoshop later. You would just click once, and the computational engine would handle the rest. If you are just starting out and still trying to grasp the basics, check out our Manual Mode 101 guide to see how we used to do it in the "old days."
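For the curious, the blending step of old-school focus stacking can be sketched in a few lines of Python. This is only a toy illustration, not how Photoshop or any camera does it: the images are tiny grayscale grids, the function names are made up for this example, and real stacking software also aligns the frames first.

```python
def laplacian_abs(img, y, x):
    """Absolute 4-neighbour Laplacian at (y, x); edges are clamped."""
    h, w = len(img), len(img[0])
    up = img[max(y - 1, 0)][x]
    down = img[min(y + 1, h - 1)][x]
    left = img[y][max(x - 1, 0)]
    right = img[y][min(x + 1, w - 1)]
    return abs(up + down + left + right - 4 * img[y][x])


def focus_stack(frames):
    """Blend frames by taking each pixel from the locally sharpest frame."""
    h, w = len(frames[0]), len(frames[0][0])
    result = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Hypothetical sharpness rule: highest local Laplacian wins.
            best = max(frames, key=lambda f: laplacian_abs(f, y, x))
            result[y][x] = best[y][x]
    return result


# Two tiny 1x6 "exposures": one has detail on the left, one on the right.
near = [[0, 100, 0, 50, 50, 50]]
far = [[50, 50, 50, 0, 100, 0]]
stacked = focus_stack([near, far])
print(stacked)  # [[0, 100, 0, 0, 100, 0]]
```

The result keeps the detailed half of each exposure, which is exactly the chore a single-shot computational lens is meant to take off your hands.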

How It Works: The Blend of CDAF and PDAF

To make this magic happen, the system uses two main types of autofocus that you might already be familiar with, but it cranks them up to eleven:

- Contrast-Detection Autofocus (CDAF): This method looks at the edges and textures in an image. When the contrast is highest, the image is in focus. CAF takes this further by dividing the image into thousands of tiny regions and optimizing them independently.

- Phase-Detection Autofocus (PDAF): This is the speed demon. It compares two slightly different views of the same scene (on dual-pixel sensors, the two halves of each pixel) and uses the offset between them to calculate how far, and in which direction, the lens needs to move to achieve focus.
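To make the contrast-detection idea concrete, here is a minimal Python sketch of "divide the image into regions and optimize each one." It assumes you already have a focus sweep (one frame per lens position), and the function names, tile size, and pixel values are all invented for illustration. Real cameras use far more refined sharpness measures.

```python
def tile_contrast(img, y0, x0, size):
    """Sum of squared horizontal and vertical differences inside one tile."""
    total = 0
    for y in range(y0, y0 + size):
        for x in range(x0, x0 + size):
            if x + 1 < x0 + size:
                total += (img[y][x + 1] - img[y][x]) ** 2
            if y + 1 < y0 + size:
                total += (img[y + 1][x] - img[y][x]) ** 2
    return total


def best_focus_per_tile(sweep, size):
    """sweep maps a lens position to the frame captured at that position.

    Returns {(tile_row, tile_col): lens position with the highest contrast}.
    """
    first = next(iter(sweep.values()))
    h, w = len(first), len(first[0])
    best = {}
    for y0 in range(0, h - size + 1, size):
        for x0 in range(0, w - size + 1, size):
            best[(y0 // size, x0 // size)] = max(
                sweep, key=lambda pos: tile_contrast(sweep[pos], y0, x0, size)
            )
    return best


# Hypothetical two-position sweep over a 2x4 frame, split into 2x2 tiles.
sweep = {
    10: [[0, 9, 5, 5], [9, 0, 5, 5]],  # left tile crisp, right tile flat
    20: [[4, 5, 0, 9], [5, 4, 9, 0]],  # left tile soft, right tile crisp
}
focus_map = best_focus_per_tile(sweep, size=2)
print(focus_map)  # {(0, 0): 10, (0, 1): 20}
```

Each tile ends up with its own "best" lens position, which is the kind of per-region focus map a computational system needs before it can render everything sharp.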

When you combine these with computational power, the system doesn't just "guess" where focus should be. It builds an estimate of how far away each part of the scene is. Researchers have already reported speeds of 21 frames per second on modified sensors using this tech. That is in the same range as the burst rates of many current mirrorless cameras, although it was achieved on a research prototype rather than a production body.
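The phase-detection half can be sketched the same way. The toy Python below slides one 1-D signal across another and picks the offset where they match best. The function name and sample values are made up, and turning that offset into an actual lens move depends on per-lens calibration that this sketch leaves out.

```python
def phase_shift(left, right, max_shift):
    """Return the shift s (in pixels) that best aligns right[i + s] with left[i]."""
    best_shift, best_cost = 0, float("inf")
    n = len(left)
    for s in range(-max_shift, max_shift + 1):
        # Compare only the samples where both signals overlap.
        pairs = [(left[i], right[i + s]) for i in range(n) if 0 <= i + s < n]
        cost = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift


# The same edge as seen by the two halves of the sensor, offset by two pixels.
left = [0, 0, 1, 5, 9, 5, 1, 0, 0, 0]
right = [0, 0, 0, 0, 1, 5, 9, 5, 1, 0]
shift = phase_shift(left, right, max_shift=3)
print(shift)  # 2
```

The size of the shift says how far out of focus that region is, and its sign says in which direction, which is why phase detection can jump toward focus instead of hunting for it.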

Why This Photography News Changes Everything for Creators

If you’re a professional or even a serious hobbyist, you might be thinking, "Edin, isn't this cheating?"

I hear that a lot. People said the same thing when we moved from film to digital, and again when we moved from DSLR to mirrorless. But here’s the thing: technology doesn't replace creativity; it removes the barriers to it.

1. Landscape Photography Redefined

In the past, getting a sharp foreground and a sharp background meant using a tiny aperture like f/11 or f/16, which often led to diffraction (a loss of sharpness caused by light spreading out as it squeezes through a small opening). With computational autofocus, you could theoretically shoot at f/2.8 to keep your shutter speed high and your ISO low, yet still have every grain of sand and every distant star in tack-sharp focus. If it reaches consumer cameras, this could be one of the fastest ways to get sharper landscape photos without needing to master complex post-processing techniques.
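If you want to put numbers on the diffraction problem, the standard rule of thumb is that the blur spot (the Airy disk) has a diameter of roughly 2.44 times the wavelength times the f-number. The quick Python sketch below assumes green light at 550 nm, and the function name is just for this example.

```python
def airy_disk_diameter_um(f_number, wavelength_nm=550):
    """Approximate Airy disk diameter (to the first dark ring) in micrometres.

    Assumes 550 nm green light unless another wavelength is given.
    """
    return 2.44 * (wavelength_nm / 1000) * f_number


for n in (2.8, 11, 16):
    print(f"f/{n}: {airy_disk_diameter_um(n):.1f} um")
# f/2.8: 3.8 um
# f/11: 14.8 um
# f/16: 21.5 um
```

Once that blur spot grows to several times the width of a single pixel on your sensor, stopping down further costs you fine detail, which is why being able to stay at f/2.8 is such an appealing idea.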

2. Action and Sports

Missing the focus on a game-winning goal or a once-in-a-lifetime wildlife encounter is heartbreaking. CAF systems could track multiple subjects with very little latency. Because the system relies less on a physical motor moving a heavy piece of glass, the "lock-on" speed could be nearly instantaneous.

3. Macro Photography

If you’ve ever tried to photograph a bug, you know that the depth of field is thinner than a piece of paper. One slight breeze and the eye of the beetle is out of focus. Computational autofocus could allow for real-time deep focus, capturing the entire subject in one go.
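Just how thin is macro depth of field? A common close-up approximation is DoF ≈ 2 × N × c × (m + 1) / m², where N is the f-number, m is the magnification, and c is the circle of confusion. The Python sketch below assumes c = 0.03 mm (a typical full-frame figure) and ignores lens-specific factors such as pupil magnification, so treat the results as ballpark numbers.

```python
def macro_dof_mm(f_number, magnification, coc_mm=0.03):
    """Approximate total depth of field in millimetres for close-up work.

    Assumes a 0.03 mm circle of confusion and a symmetric lens design.
    """
    return 2 * f_number * coc_mm * (magnification + 1) / magnification ** 2


for n in (2.8, 8, 16):
    print(f"1:1 at f/{n}: {macro_dof_mm(n, 1.0):.2f} mm")
# 1:1 at f/2.8: 0.34 mm
# 1:1 at f/8: 0.96 mm
# 1:1 at f/16: 1.92 mm
```

Even stopped down to f/16 at life-size magnification, you get less than two millimetres to work with, and by then diffraction is already eating into your sharpness.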

AI Integration: The Secret Sauce

We can't talk about computational focus without talking about AI. Today's cameras are essentially computers with lenses attached. When you use software like Luminar, you’re already seeing what AI can do for your photos after you take them. AI can sharpen blurry images, recognize faces, and even replace skies.

Now, imagine that level of intelligence happening inside the camera before the shutter even closes. The AI recognizes that you’re shooting a portrait and ensures the iris of the eye is sharp, or it recognizes a car and focuses on the driver's helmet. This is why staying updated with photography tutorials is more important than ever. The tools are changing, and we need to change with them.

For those of you looking to stay ahead of the curve, I’ve been chatting with Sonny, our Social Media Manager, about how we’re going to cover these shifts. He’ll be dropping some bite-sized reels on these tech breakthroughs, so make sure you’re following us to see the real-world applications of these concepts.

Beyond the Camera: Real-World Applications

While we care most about how this helps us take better photos of our dogs or epic sunsets, the implications of computational autofocus reach far beyond our industry.

- Microscopy: Imagine a scientist being able to see every layer of a biological sample at the same time. This could speed up medical research and disease detection significantly.

- Autonomous Vehicles: Self-driving cars rely on cameras to "see" the world. If a camera can have sharp focus on a nearby pedestrian and a distant stop sign simultaneously, the safety margins could improve significantly.

- AR/VR: For virtual reality to feel real, our eyes need to be able to focus naturally on objects at different distances. Computational optics will make VR headsets much more comfortable and immersive.

Is Traditional Photography Dead?

Absolutely not. Just because a camera can focus on everything doesn't mean it should. Photography is about choice. It’s about deciding what the viewer sees and what they don’t. We still love our bokeh. We still love the "dreamy" look of a wide-open lens.

The difference is that now, that "look" becomes a creative choice rather than a technical limitation. You can choose to have a shallow depth of field, or you can choose to have total clarity. The camera is no longer the boss of you; you are the boss of the camera.

If you're feeling a bit overwhelmed by all this tech talk, don't worry. You can always head over to PhotoGuides.org for some excellent breakdowns on gear and techniques. And if you want to see how this tech translates into fine art, take a look at edinfineart.com to see the balance between technology and vision.

How to Prepare for the Future

You don’t need to throw away your current gear just yet. However, you should start thinking about how your workflow will change.

- Master the Basics: You still need to understand composition and lighting. A sharp photo of a boring subject is still a boring subject. Check out our photography trends list to see what else is on the horizon.

- Get Comfortable with AI: Whether it’s in-camera or in post-production, AI is your friend. Tools like Luminar are great training wheels for understanding how computational power can enhance your vision.

- Invest in Education: The hardware is getting smarter, which means the "skill" of photography is shifting from "how to operate the machine" to "how to direct the machine." Stay tuned to our resources page for the latest updates.

What’s Next?

We are living in the most exciting time for photography since the invention of the digital sensor. Computational autofocus is just the tip of the iceberg. As we move closer to 2027, we expect to see these "computational lenses" hit the consumer market, likely starting in smartphones before migrating to full-frame mirrorless bodies.

Imagine a world where you never miss focus. A world where "blurry" is a choice, not an accident. That’s where we’re headed.

If you want to keep up with these fast-paced changes without spending hours reading technical white papers, we’ve got you covered. Check out today’s photography news explained in under 3 minutes. It’s the quickest way to stay informed and keep your edge in this competitive industry.

The world of photography is changing fast, but one thing remains the same: it’s all about the story you tell. Whether you’re using a vintage film camera or a futuristic AI-powered beast, the goal is to capture a moment that matters. Computational autofocus is just another tool to help you do exactly that.

For more professional insights and to see this tech in action in a studio environment, visit edinstudios.com or check out proshoot.io for top-tier production tips.

Keep shooting, keep experimenting, and don't be afraid of the math. It’s here to help. If you have questions about how this might affect your specific setup, feel free to contact us. We’re always happy to geek out over the latest gear and news.

And hey, if you're ready to make your current photos look like they were shot on the cameras of the future, head over to our shop and grab some of our Lightroom presets. They might not be a computational lens, but they’ll definitely make your work pop.